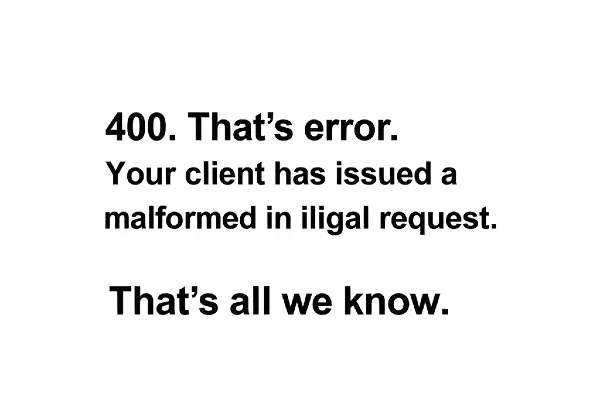

Upstream Connect Error Or Disconnect/Reset Before Headers. Reset Reason: Connection Failure

The error message “upstream connect error or disconnect/reset before headers. reset reason: connection failure” appears regularly in service meshes, API gateways, and reverse proxies. This article explains what the message means, how to diagnose it quickly, and which fixes restore reliable connectivity.

Search Intent Behind The Keyword

People searching this phrase typically want immediate root-cause diagnosis and remediation steps for Envoy- or proxy-related upstream connection failures, so this article focuses on clear, actionable guidance to identify whether the problem is network, TLS, configuration, or application-level.

What The Error Actually Means

This message means the proxy tried to open a TCP or TLS connection to an upstream host, but the connection failed or was reset before the upstream sent any HTTP response headers. The failure happened during connection establishment or immediately after it, so it is not a normal HTTP error produced by the application. Envoy typically returns it to the client as the body of an HTTP 503 response.

Primary Causes You Should Consider

The typical root causes are straightforward and repeatable, and knowing them speeds debugging:

- Upstream process is down, crashing, or not listening on the expected port.

- Network path issues: firewall, routing, or NAT problems blocking the connection.

- TLS/SNI or ALPN mismatch causing handshake failures.

- Protocol mismatch (HTTP/1.x vs HTTP/2) between proxy and upstream.

- Service discovery or DNS returning wrong/old endpoints.

- Resource constraints or connection limits (circuit breakers, max connections).

Quick Diagnostic Steps You Can Run Now

Follow these targeted checks in order to rapidly isolate the layer causing the reset:

- Check proxy logs (Envoy, NGINX, HAProxy) around the timestamp for upstream connect failures.

- Test with curl, forcing HTTP/2 where relevant: curl -v --http2 https://upstream:port/ or curl -v http://upstream:port/ to verify reachability and protocol behavior.

- Run TCP-level checks: nc -vz upstream port to test the connection, ss or netstat on the upstream host to verify the socket is listening, and tcpdump to capture SYN/RST sequences.

- Run TLS diagnostics: openssl s_client -connect host:port -servername host -alpn h2,http/1.1 and inspect the handshake and the negotiated ALPN protocol.

- If Kubernetes: kubectl get endpoints, kubectl describe svc, kubectl logs on pods, and verify readiness/liveness probes.
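
The TCP-level check above can also be scripted. The following is a minimal sketch, assuming Python 3 is available on the proxy host; the function name probe_tcp and the result labels are illustrative and not part of any tool. It makes one connection attempt and names the failure mode, which helps separate "nothing is listening" from "packets are being dropped".

```python
import socket


def probe_tcp(host: str, port: int, timeout: float = 3.0) -> str:
    """Try one TCP connection and name the failure mode, if any."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return "open"  # the TCP handshake completed
    except socket.gaierror:
        return "dns_failure"  # the host name did not resolve
    except ConnectionRefusedError:
        return "refused"  # the host answered with RST: nothing is listening
    except (socket.timeout, TimeoutError):
        return "timeout"  # no answer: often a firewall drop or a routing gap
    except OSError as exc:
        return f"error: {exc}"  # anything else, e.g. network unreachable
```

A "refused" result usually points at the upstream process or port, while "timeout" more often points at the network path between proxy and upstream.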

Envoy- and Proxy-Specific Checks

If you use Envoy or a sidecar proxy, confirm cluster configuration, health of endpoints, and protocol settings because Envoy emits this exact wording when connect attempts fail before headers:

- Inspect Envoy admin /clusters and /stats for upstream_cx_connect_fail and upstream_cx_total metrics.

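- Verify that cluster endpoints are correct and not marked unhealthy, and that EDS/ADS is delivering the expected endpoints.

- If the upstream expects HTTP/2, check that the cluster enables it (http2_protocol_options on the cluster, or explicit_http_config under typed_extension_protocol_options in newer Envoy versions); if the upstream only supports HTTP/1.1, make sure HTTP/2 is not enabled for that cluster.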

- Review transport_socket TLS settings for proper SNI and certificate validation behavior.
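
To compare upstream_cx_connect_fail against upstream_cx_total per cluster, the plain-text output of the admin /stats endpoint can be parsed. This is a rough sketch that assumes lines of the form "cluster.NAME.STAT: VALUE"; the function name connect_fail_ratios is hypothetical, and fetching the text (for example with curl against the admin port) is left to you.

```python
import re
from collections import defaultdict

# Matches e.g. "cluster.backend.upstream_cx_connect_fail: 3"
_STAT = re.compile(
    r"^cluster\.(?P<cluster>.+)\."
    r"(?P<stat>upstream_cx_connect_fail|upstream_cx_total): (?P<value>\d+)$"
)


def connect_fail_ratios(stats_text: str) -> dict[str, float]:
    """Return connect_fail / total per cluster, skipping clusters with no connections."""
    counters: dict[str, dict[str, int]] = defaultdict(dict)
    for line in stats_text.splitlines():
        match = _STAT.match(line.strip())
        if match:
            counters[match["cluster"]][match["stat"]] = int(match["value"])

    ratios: dict[str, float] = {}
    for cluster, values in counters.items():
        total = values.get("upstream_cx_total", 0)
        failed = values.get("upstream_cx_connect_fail", 0)
        if total > 0:
            ratios[cluster] = failed / total
    return ratios
```

A cluster whose ratio is far above the others is a reasonable place to start looking at endpoints, protocol settings, and TLS configuration.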

Network-Level Fixes That Often Resolve The Issue

Network problems are a common root cause; apply these fixes to remove connectivity barriers:

- Open required ports on firewalls and security groups; verify routing between proxy and upstream.

- Fix NAT or load balancer health check configurations that remove backends prematurely.

- Update DNS TTLs and confirm service discovery returns current endpoints; flush caches if needed.
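
One way to confirm that name resolution returns current endpoints is to resolve the upstream name from the proxy host and compare the result with the addresses you expect. The sketch below assumes you can obtain the expected set yourself (for example from kubectl get endpoints); the function names are illustrative.

```python
import socket


def resolve_ips(host: str, port: int) -> set[str]:
    """Resolve a host name to the set of IP addresses the local resolver returns."""
    infos = socket.getaddrinfo(host, port, type=socket.SOCK_STREAM)
    return {info[4][0] for info in infos}


def compare_endpoints(resolved: set[str], expected: set[str]) -> dict[str, set[str]]:
    """Split the difference between resolved and expected addresses."""
    return {
        "stale": resolved - expected,    # still resolvable, but no longer expected
        "missing": expected - resolved,  # expected, but not returned by the resolver
    }
```

Entries under "stale" suggest cached or outdated records; entries under "missing" suggest that service discovery has not published a backend yet.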

Application- and Server-Level Fixes

If the server is reachable but resets occur, check these application-layer causes and remedies:

- Ensure the upstream process binds the expected interface/port and does not crash on new connections.

- Fix TLS configuration mismatches (cert chain, supported ciphers, SNI) and ensure ALPN supports HTTP/2 when required.

- Tune server thread/worker counts and accept queues to avoid immediate connection resets under load.
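
To check which protocol an upstream actually selects through ALPN, a short script using Python's standard ssl module can complement openssl s_client. This is a sketch under assumptions: the helper names are hypothetical, certificate verification uses the system trust store, and an upstream with a private CA would need that CA loaded into the context first.

```python
import socket
import ssl
from typing import Optional, Sequence


def alpn_context(offered: Sequence[str] = ("h2", "http/1.1")) -> ssl.SSLContext:
    """Build a verifying client context that offers the given ALPN protocols."""
    ctx = ssl.create_default_context()  # verifies the certificate chain and host name
    ctx.set_alpn_protocols(list(offered))
    return ctx


def negotiated_alpn(host: str, port: int, server_name: Optional[str] = None,
                    timeout: float = 5.0) -> Optional[str]:
    """Complete a TLS handshake and return the ALPN protocol the server chose."""
    ctx = alpn_context()
    with socket.create_connection((host, port), timeout=timeout) as raw:
        # server_hostname sets the SNI value and the name checked against the certificate
        with ctx.wrap_socket(raw, server_hostname=server_name or host) as tls:
            return tls.selected_alpn_protocol()  # None if the server selected nothing
```

If the proxy is configured to speak HTTP/2 to the upstream and this returns "http/1.1" or None, the protocol settings on one side need to change. A handshake error raised here (ssl.SSLError) points at certificates, SNI, or cipher support instead.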

Configuration Tweaks And Resilience Patterns

Apply these changes to reduce recurrence and improve resilience in production environments:

- Adjust connect_timeout, retry policy, and circuit-breaker settings in the proxy to allow graceful retries and avoid cascading failures.

- Enable health checks or active probe endpoints on upstreams so the proxy can avoid sending traffic to unhealthy instances.

- Use outlier detection and load balancing policies (least_request, ring_hash) to distribute load and avoid hotspots.
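
The retry idea behind these settings can be illustrated outside the proxy. The sketch below is not Envoy's implementation; it is a generic exponential backoff with full jitter, and the function name retry_connect and its parameters are assumptions made for the example. It retries only on connection errors (refused or reset) and re-raises the last error once the retry budget is spent.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def retry_connect(attempt: Callable[[], T], retries: int = 3,
                  base_delay: float = 0.1, max_delay: float = 2.0,
                  sleep: Callable[[float], None] = time.sleep) -> T:
    """Call attempt(); on ConnectionError, wait with jittered backoff and try again."""
    if retries < 0:
        raise ValueError("retries must be >= 0")
    for n in range(retries + 1):
        try:
            return attempt()
        except ConnectionError:
            if n == retries:
                raise  # retry budget exhausted: surface the last error
            # Cap the exponential delay, then pick a random point below the cap
            delay = min(max_delay, base_delay * 2 ** n)
            sleep(random.uniform(0, delay))
    raise AssertionError("unreachable")
```

Keeping the retry count small and the delays jittered matters here: aggressive retries against an upstream that is already failing tend to make the failure worse.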

Monitoring And Metrics To Watch

Instrumenting the right metrics gives early warning and context for these errors:

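- Track the Envoy cluster counters upstream_cx_connect_fail, upstream_cx_total, and upstream_rq_5xx; in Prometheus-format output the connection counters carry an envoy_cluster_ prefix, for example envoy_cluster_upstream_cx_connect_fail.

- Alert on spikes in connect failures, sudden endpoint count changes, or increased TCP RST rates seen in packet captures.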

- Correlate proxy metrics with application logs and infrastructure events for root cause linkage.
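
Because the connection metrics are cumulative counters, a spike is easier to see in the change between two scrapes than in the raw values. The following sketch assumes you already collect (connect_fail, total) pairs at a fixed interval; the names Sample, interval_fail_ratio, and should_alert, and the default thresholds, are illustrative choices rather than recommendations.

```python
from typing import NamedTuple, Optional


class Sample(NamedTuple):
    connect_fail: int  # cumulative failed connection attempts
    total: int         # cumulative connection attempts


def interval_fail_ratio(prev: Sample, curr: Sample) -> Optional[float]:
    """Failure ratio between two scrapes, or None if it cannot be computed."""
    d_fail = curr.connect_fail - prev.connect_fail
    d_total = curr.total - prev.total
    if d_fail < 0 or d_total < 0:
        return None  # counters went backwards, e.g. after a proxy restart
    if d_total == 0:
        return None  # no new connections in this interval
    return d_fail / d_total


def should_alert(prev: Sample, curr: Sample, threshold: float = 0.05,
                 min_connections: int = 20) -> bool:
    """Alert only when there is enough traffic and the ratio exceeds the threshold."""
    ratio = interval_fail_ratio(prev, curr)
    if ratio is None:
        return False
    return (curr.total - prev.total) >= min_connections and ratio > threshold
```

The min_connections guard keeps a single failed connection on a quiet cluster from paging anyone.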

When To Escalate To Infrastructure Or Vendor Support

Escalate when the issue persists after basic checks or points to underlying infrastructure problems that you cannot change:

- Persistent network path failures across multiple hosts or zones indicate load balancer, cloud network, or ISP issues.

- TLS handshakes failing at scale may require certificate reissuance or vendor support for ALPN/cipher compatibility.

- Opaque proxy or service mesh behavior after configuration review should be escalated to product or vendor support with logs and captures.

Preventive Practices You Should Adopt

Prevent recurrence by adopting these operational practices:

- Implement readiness probes and graceful shutdown to avoid routing traffic to non-ready pods or services.

- Run synthetic health checks and chaos testing to validate behavior against transient failures.

- Automate configuration validation, and include canary rollouts with observability before wide releases.

Conclusion

You now have a concise, actionable map for diagnosing and fixing “upstream connect error or disconnect/reset before headers. reset reason: connection failure”: start with logs and simple TCP/TLS checks, validate proxy and upstream configuration, fix network or TLS mismatches, then harden systems with timeouts, retries, and health checks to prevent recurrence. Working through the layers in that order narrows the cause quickly and keeps services resilient afterward.